Are you struggling to determine which changes to your website will improve its search engine rankings and user engagement?

A/B testing, a powerful method to compare two web page versions and measure their performance, is the solution you need.

In SEO, where even small tweaks can make a significant impact, A/B testing allows you to make data-driven decisions that can enhance your site’s visibility and effectiveness.

In this post, we’ll discuss the essentials of A/B testing in SEO, from understanding its importance to implementing successful tests, ensuring you maximize your website’s potential.

So, without any further ado, let’s get started.

1 What is A/B Testing?

A/B testing, also known as split testing, is a method used to compare two versions of a webpage or other user experience to determine which one performs better.

By presenting version A (the control) to one group of users and version B (the variation) to another group, you can analyze how each version impacts user behaviour based on predefined metrics, such as click-through rates, conversion rates, or time spent on the page.
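Behind the scenes, testing tools need a way to decide which group each visitor belongs to. The Python sketch below shows one common approach, hash-based bucketing. It is not how any particular tool works. It assumes every visitor has a stable ID (for example, from a first-party cookie), and the function and experiment names are hypothetical.

```python
import hashlib

def assign_variant(visitor_id: str, experiment: str = "headline-test") -> str:
    """Return "A" (control) or "B" (variation) for a visitor.

    Hashing the experiment name together with a stable visitor ID splits
    traffic roughly 50/50, and a returning visitor always gets the same version.
    The function and experiment names are hypothetical.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # bucket number from 0 to 99
    return "A" if bucket < 50 else "B"

# Rough check of the split over 10,000 made-up visitor IDs.
share_b = sum(assign_variant(f"visitor-{i}") == "B" for i in range(10_000)) / 10_000
print(f"Share of visitors in B: {share_b:.1%}")
```

Because the assignment is deterministic, a visitor who returns tomorrow sees the same version, which keeps their behaviour attributable to a single variant.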

For instance, if you run an e-commerce website, you can test two different headlines for a product page to see which one leads to more purchases. Version A might have a headline that reads "High-Quality Shoes at Affordable Prices", while version B might read "Exclusive Discounts on Top-Brand Shoes".

By monitoring which headline generates more sales, you can make an informed decision about which version to implement more broadly. This approach helps you to make data-driven decisions, optimizing the content and design to better meet user needs and achieve specific goals.

2 Why Should You Run A/B Tests?

Running A/B tests is essential for optimizing your website and enhancing its performance based on real user data.

These tests allow you to make informed decisions by comparing different versions of a webpage to see which one resonates more with your audience.

By identifying the most effective elements—be it headlines, images, call-to-action buttons, or overall design—you can significantly improve key metrics such as conversion rates, click-through rates, and user engagement.

A/B testing minimizes guesswork and reduces the risk of implementing changes that can negatively impact your site’s performance. It provides actionable insights that help fine-tune your SEO strategies, ensuring that your efforts lead to measurable improvements.

3 Examples of Elements to A/B Test

When conducting A/B testing for SEO, selecting the right elements to test can significantly impact the effectiveness of your optimization efforts.

Here are some examples of elements you can consider testing:

- Title tags: Title tags are crucial as they influence click-through rates from search engine results pages.

- Meta descriptions: Meta descriptions, though not directly affecting rankings, can enhance click-through rates by providing compelling summaries.

- Headings: Headings help structure content and improve readability, making them important for user experience and SEO.

- Call-to-action: Call-to-action (CTA) buttons are key conversion elements, and their text, colour, size, and placement can all be tested for optimal performance.

- Layout and Design: The overall content layout and design, including the use of images and videos, play a significant role in retaining user attention and reducing bounce rates.

- Email Copy: Email copy is an important element, as variations in wording, tone, and length can affect how recipients perceive and respond to your messages.

- Email Subject Lines: Email subject lines are particularly important since they directly influence open rates; testing different phrases and formats can reveal what prompts more users to open the email.

- Product Page Layouts: Product page layouts are another key area for testing, as the arrangement of information, images, and call-to-action buttons can affect user experience and purchasing decisions.

By systematically testing these elements, you can gather valuable insights into what works best for your audience and make data-driven decisions to optimize your website for both users and search engines.

4 Best Practices for A/B Testing

Let us now discuss the best practices that you can follow for A/B testing.

4.1 Segment Your Audience Appropriately

Segmenting your audience appropriately is an important step in A/B testing for SEO because it ensures that the insights you gain are relevant and actionable for specific user groups.

Different segments of your audience may interact with your website in unique ways, influenced by factors such as demographics, behaviour, device type, or geographic location.

By dividing your audience into distinct groups, you can tailor your tests to the specific needs and preferences of each segment, leading to more precise and meaningful results.

For instance, if you’re running an A/B test on an online retail site, you can segment your audience by device type—comparing the behaviour of mobile users versus desktop users.

Mobile users may respond differently to page layout changes or navigation tweaks than desktop users.

Running separate A/B tests for each segment can help you optimize the user experience more effectively for both groups.
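To see why segmenting matters when you read the results, here is a minimal Python sketch that computes conversion rates per device segment and variant. The event log is made-up toy data, far too small to be statistically meaningful, and the function name is hypothetical. It assumes each record is a (segment, variant, converted) tuple.

```python
from collections import defaultdict

# Hypothetical raw test log: (device segment, variant shown, converted?)
events = [
    ("mobile", "A", True), ("mobile", "A", False), ("mobile", "B", True),
    ("mobile", "B", True), ("desktop", "A", True), ("desktop", "A", True),
    ("desktop", "B", False), ("desktop", "B", True),
]

def conversion_by_segment(rows):
    """Return {(segment, variant): conversion rate} so each segment is read separately."""
    visits = defaultdict(int)
    conversions = defaultdict(int)
    for segment, variant, converted in rows:
        visits[(segment, variant)] += 1
        conversions[(segment, variant)] += int(converted)
    return {key: conversions[key] / visits[key] for key in visits}

for (segment, variant), rate in sorted(conversion_by_segment(events).items()):
    print(f"{segment:8} {variant}: {rate:.0%}")
```

In this toy data, both versions convert 3 out of 4 visits when everything is pooled, yet mobile users favour B and desktop users favour A. The difference only appears once the results are split by segment.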

4.2 Don’t Cloak the Test Pages

Avoiding the cloaking of test pages in A/B testing for SEO helps to maintain transparency and adhere to search engine policies.

Cloaking involves presenting different content or URLs to search engines than what is shown to users, which can lead to penalties from search engines like Google.

When running A/B tests, it’s important that both the control and variation pages are accessible and consistent for both users and search engines. This practice ensures that the integrity of the test is maintained and that your site remains in good standing with search engine algorithms.

For instance, suppose you’re testing two different versions of a product page to see which one leads to higher conversion rates. If you cloak the test by showing Google the original version (Version A) while users see the new variation (Version B), you risk being penalized for deceptive practices.

4.3 Use rel="canonical" links

Using rel="canonical" links in A/B testing for SEO helps prevent duplicate content issues and ensures that search engines correctly attribute authority to the preferred version of a page.

The rel="canonical" tag is a piece of HTML code that you can place in the head section of your web pages to indicate the original version of the content.

When conducting A/B tests, you might have multiple variations of a page, and search engines can interpret these variations as duplicate content, which can affect the SEO value.

For instance, if you’re testing two different versions of a landing page (Version A and Version B), you should add a rel="canonical" tag on the test pages pointing to the original version of the page. If Version A is the original, both Version A and Version B should have a rel="canonical" tag that points to Version A.

This practice tells search engines that Version A is the authoritative page, thereby consolidating all SEO signals to this primary URL.
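In the page's HTML, the tag is a single `<link>` element in the `<head>`. The Python sketch below builds that element for both versions. It assumes your templates can inject a string into the `<head>`, and the URLs and the helper name `canonical_link` are hypothetical. In practice, an SEO plugin usually outputs this tag for you.

```python
from html import escape

def canonical_link(original_url: str) -> str:
    """Build the <link> element placed in the <head> of every test version (hypothetical helper)."""
    return f'<link rel="canonical" href="{escape(original_url, quote=True)}">'

# Hypothetical URLs: Version A is the original, Version B might live at /landing-page-b.
ORIGINAL = "https://example.com/landing-page"
head_for_version_a = canonical_link(ORIGINAL)
head_for_version_b = canonical_link(ORIGINAL)  # Version B also points at Version A
print(head_for_version_b)
# <link rel="canonical" href="https://example.com/landing-page">
```

Both versions emit the same tag, so search engines are told that Version A is the URL to consolidate signals on, whichever version they happen to crawl.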

Refer to our dedicated tutorial on canonical URLs to learn more about canonical tags and how to implement them.

4.4 Use 302 Redirects, Not 301 Redirects

Using 302 redirects instead of 301 redirects for A/B testing in SEO is important because 302 redirects are temporary and signal to search engines that the redirect is not permanent.

For instance, suppose you’re running an A/B test to compare two different versions of your homepage. If you use a 301 redirect from the original homepage (Version A) to the test version (Version B), search engines will treat this as a permanent change. They will transfer the ranking power and indexing to Version B, which can affect the SEO performance of your original page.

On the other hand, using a 302 redirect indicates that the change is temporary. Search engines will maintain the original URL in their index while still allowing users to experience Version B.

By using 302 redirects, you can effectively conduct your A/B tests without compromising the long-term SEO value of your original page.

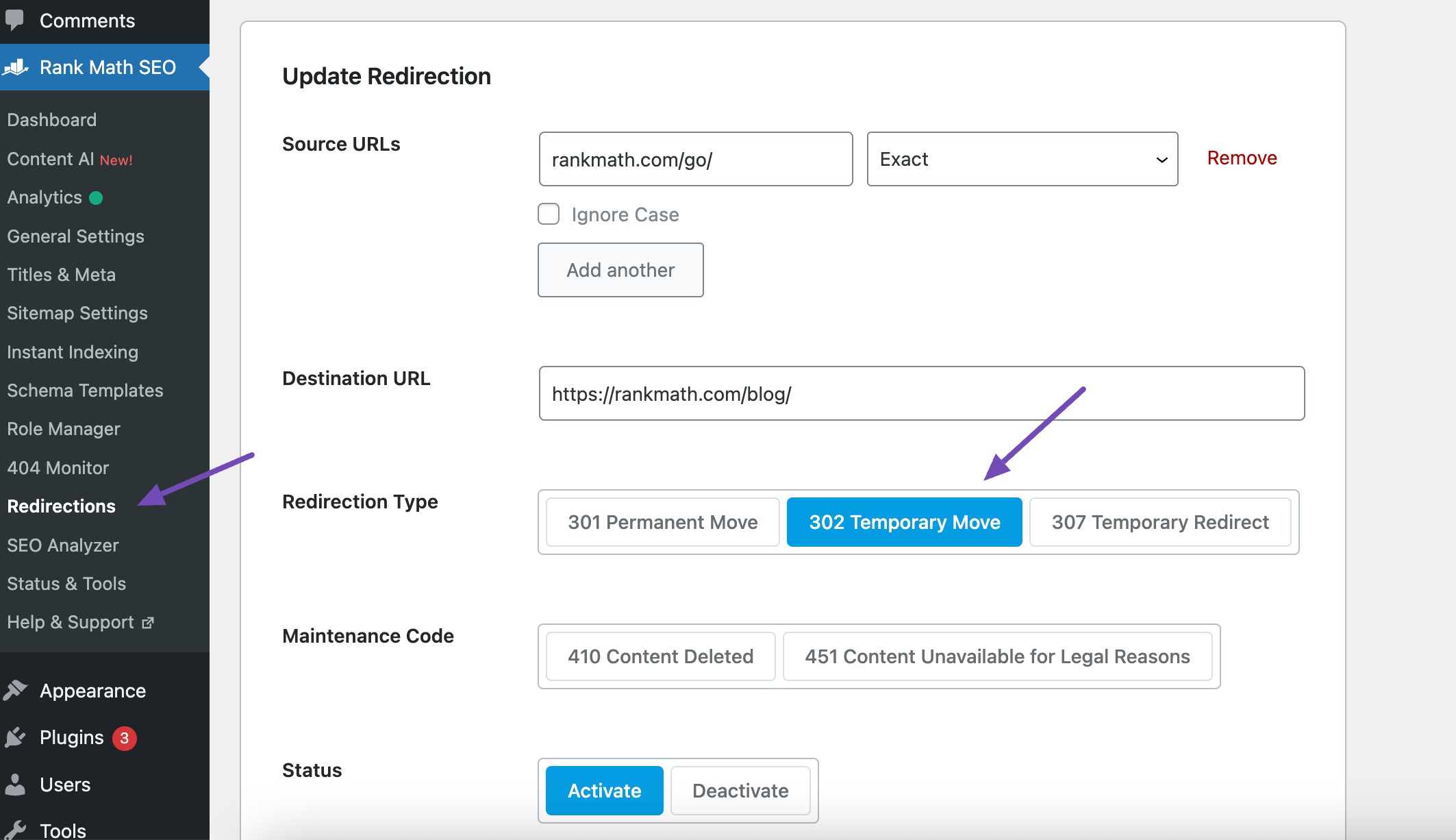

With Rank Math, you can easily create 302 redirects.

To do so, navigate to Rank Math SEO → Redirections module. Next, click on Add New to create a new redirection.

Add the Source URLs and the Destination URL and select the 302 Temporary Move redirect, as shown in the screenshot.

This approach ensures that once the test is concluded and you’ve determined the winning version, you can either make the change permanent (and then potentially use a 301 redirect) or revert to the original version without having disrupted your site’s SEO standing.
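Outside WordPress, the same temporary redirect can be issued from your own server code. The framework-agnostic Python sketch below returns a status line and headers for a request to the original page. It assumes a stable visitor ID is available, and the URLs and the function name `response_for` are hypothetical. The detail that matters is the status line `302 Found` rather than `301 Moved Permanently`.

```python
import hashlib

# Hypothetical URLs for the two homepage versions in the test.
ORIGINAL_URL = "https://example.com/"
VARIATION_URL = "https://example.com/home-b"

def response_for(visitor_id: str) -> tuple[str, list[tuple[str, str]]]:
    """Return (status line, headers) for a request to ORIGINAL_URL.

    Visitors bucketed into the variation get a temporary 302 redirect, which
    signals the move is not permanent, so search engines generally keep
    ORIGINAL_URL as the page to index.
    """
    bucket = hashlib.sha256(visitor_id.encode("utf-8")).digest()[0] % 2
    if bucket == 1:
        return "302 Found", [("Location", VARIATION_URL)]
    # Control group: serve Version A at its own URL, no redirect.
    return "200 OK", [("Content-Type", "text/html; charset=utf-8")]

print(response_for("visitor-42"))
```

When the test ends, you would remove the redirect or, if Version B wins, make the change permanent as described above.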

4.5 Run the Experiment Only as Long as Necessary

Running an experiment only as long as necessary in A/B testing for SEO is essential to obtain accurate results without causing unnecessary disruptions.

The duration of an A/B test should be long enough to collect sufficient data to reach statistical significance but not so long that it introduces external factors that can skew the results.

For instance, if you’re testing two different versions of a product page to see which one leads to higher conversion rates, you need to ensure that the test runs long enough to gather enough data points. This means running the test for a few weeks to account for variations in user behaviour across different days of the week and traffic levels.

However, if the test runs too long, external factors such as seasonal changes, marketing campaigns, or algorithm updates can influence the results, making it difficult to isolate the impact of the changes being tested.

By carefully planning the test duration, you can balance the need for reliable data with the need to minimize potential confounding variables.
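To put a number on "long enough", you can estimate the sample size before the test starts. The Python sketch below uses the standard sample-size formula for comparing two proportions (two-sided test, 5% significance, 80% power by default) and then rounds the duration up to whole weeks so that every weekday is covered. The baseline rate, expected lift, and daily traffic in the example are illustrative assumptions, not benchmarks.

```python
from math import ceil, sqrt
from statistics import NormalDist

def sample_size_per_variant(baseline: float, expected: float,
                            alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH group to detect a change from baseline to expected."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)
    p_bar = (baseline + expected) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_power * sqrt(baseline * (1 - baseline) + expected * (1 - expected))) ** 2
    return ceil(numerator / (expected - baseline) ** 2)

def estimate_duration_days(baseline: float, expected: float, daily_visitors: int) -> int:
    """Days to reach the sample size, rounded up to whole weeks to cover weekday patterns."""
    n = sample_size_per_variant(baseline, expected)
    days = ceil(2 * n / daily_visitors)  # traffic is split evenly between A and B
    return ceil(days / 7) * 7

print(sample_size_per_variant(0.03, 0.036), estimate_duration_days(0.03, 0.036, 2_000))
```

With a 3% baseline conversion rate, a hoped-for lift to 3.6%, and 2,000 visitors a day, this works out to roughly 14,000 visitors per version, or about two weeks. Smaller expected lifts or lower traffic stretch the test considerably.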

5 Common A/B Testing Mistakes

Let us now discuss the common mistakes that you can avoid during A/B testing.

5.1 Testing Too Many Variables Simultaneously

Testing too many variables simultaneously is a common mistake in A/B testing that can significantly compromise the clarity and usefulness of the results.

For instance, if you test a new headline ("Start Your Online Store Today" vs "Launch Your Business with Ease") alongside a redesigned call-to-action button ("Get Started" vs "Try for Free") and see a significant increase in conversions, it becomes challenging to determine which specific change drove the improvement.

This lack of clarity makes it difficult to draw actionable insights from the test, as you cannot attribute the success or failure to a single variable. Consequently, you may miss valuable opportunities to optimize individual components of your webpage.

To avoid this mistake, it’s essential to isolate each variable and test each one independently. By changing only one element at a time, such as the headline in one test and the call-to-action button in another, you can accurately measure the impact of each change and make more informed decisions about which elements drive the best results.

5.2 Not Giving Your Tests Enough Time to Run

A/B tests need adequate time to collect sufficient data and account for variations in user behaviour over different periods.

If you end a test too soon, you may not capture a representative sample of your audience, leading to results that don’t reflect the true performance of the changes being tested.

For instance, running a test for just a few days might not provide enough data to account for weekly patterns in user behaviour or external factors such as holidays or marketing campaigns that can temporarily influence traffic and engagement.

To avoid this mistake, it’s essential to determine an appropriate duration for your test based on your site’s traffic and the statistical significance required.

5.3 Neglecting Seasonality and External Factors

Seasonality refers to predictable fluctuations in user behaviour related to specific times of the year, such as holidays, back-to-school periods, or summer vacations.

External factors include events or trends impacting user behaviour, such as major news events, economic changes, or new competitor activities.

When you fail to account for these variables, you risk attributing changes in user behaviour to the elements you’re testing rather than the external influences.

For instance, if you conduct an A/B test on a retail website during the holiday season, the increase in traffic and conversions might be due to seasonal shopping trends rather than the changes you implemented.

To avoid these risks, it’s important to plan your tests around known seasonal trends and monitor for any significant external factors that might influence the results.

If an unexpected external event occurs during a test, consider pausing the test or extending its duration to ensure you collect enough data from a more stable period.

5.4 Focusing Solely on Positive Outcomes

When you only pay attention to tests that yield favourable results, you miss out on valuable insights that can be gained from negative or neutral outcomes.

These outcomes are equally important because they help you understand what doesn’t work, avoid repeating the same mistakes, and stop wasting resources on ineffective strategies.

Additionally, focusing solely on positive outcomes can create a false sense of progress and lead to overconfidence in your testing approach.

It’s important to analyze all test results, including those that are negative or inconclusive, to gain a comprehensive understanding of user behaviour and preferences.

5.5 Misinterpreting Results

Misinterpreting results is another common mistake in A/B testing that can lead to incorrect conclusions and misguided decisions.

This error often arises from a misunderstanding of statistical significance or the impact of external variables.

When results are misinterpreted, the changes implemented based on these flawed insights can negatively affect user experience and overall performance.

It’s important to have a solid understanding of statistical principles and ensure that your tests reach statistical significance to avoid misinterpreting results.
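As an example of what "reaching statistical significance" means in practice, the Python sketch below runs a two-proportion z-test, a common way to compare conversion rates between two versions. The conversion counts are hypothetical, and most A/B testing tools run an equivalent calculation for you.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # conversion rate if A and B were identical
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical counts: 300 of 10,000 visitors converted on A, 345 of 10,000 on B.
z, p = two_proportion_z_test(300, 10_000, 345, 10_000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Here B converts 15% better in relative terms, yet the p-value is around 0.07, above the commonly used 0.05 threshold, so the result is not conclusive. Deciding the sample size up front and not stopping the moment the p-value dips below the threshold also helps, because repeatedly checking and stopping early inflates the chance of a false positive.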

5.6 Not Accounting for User Experience

Not accounting for user experience in A/B testing can undermine the effectiveness of your optimization efforts.

Focusing solely on quantitative metrics such as conversion rates or click-through rates without considering the overall user experience can lead to changes that improve short-term metrics but harm long-term user satisfaction and engagement.

For instance, an A/B test might show that a more aggressive pop-up increases email sign-ups. However, if the pop-up is intrusive and disrupts the user experience, it can lead to higher bounce rates, lower time spent on the site, and ultimately damage the brand’s reputation. The audience might find the site annoying or frustrating, which can reduce repeat visits and customer loyalty.

To avoid this mistake, it’s important to balance quantitative data with qualitative insights. Conduct user surveys and usability tests and gather feedback to understand how changes impact user perception and behaviour. Ensure that any A/B testing strategy includes measures of user satisfaction and experience alongside traditional performance metrics.
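One way to keep experience measures in the decision is to define "guardrail" metrics that a winning version must not harm. The Python sketch below is a hypothetical decision rule, not a standard formula. It assumes the primary lift has already passed a significance check, that guardrail changes are expressed so a positive value means worse (for example, a higher bounce rate), and that a 2% tolerance is acceptable. Qualitative feedback from surveys and usability tests still has to be reviewed separately.

```python
def should_ship(primary_lift: float, guardrails: dict[str, float],
                max_harm: float = 0.02) -> bool:
    """Ship only if the main metric improved and no experience metric worsened beyond max_harm.

    primary_lift: relative change in the main metric (0.10 = 10% more sign-ups).
    guardrails: relative change in metrics where a positive value is bad (e.g. bounce rate).
    The 2% default tolerance is an illustrative assumption.
    """
    if primary_lift <= 0:
        return False
    return all(change <= max_harm for change in guardrails.values())

# Hypothetical pop-up test: sign-ups up 12%, but bounce rate up 9%.
print(should_ship(0.12, {"bounce_rate": 0.09, "exit_rate": 0.01}))  # False
```

In the pop-up example, the rule rejects the variation even though sign-ups increased, because the bounce rate rose well beyond the tolerance.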

5.7 Overlooking Audience Segmentation

When tests are conducted on a broad, undifferentiated audience, the results may not accurately reflect the preferences or behaviours of specific user segments, leading to generalized insights that might not be effective for all users.

Moreover, different segments of your audience may have varying needs and behaviours. A feature that works well for one segment might not be as effective for another.

For instance, younger audiences might prefer a more interactive and visually appealing interface, while older audiences might value simplicity and ease of navigation. By failing to segment the audience, you risk making changes that do not align with these distinct preferences, resulting in a less personalized and effective user experience.

To avoid this mistake, it’s important to identify and analyze the relevant segments of your audience. By doing so, you can gain more precise and actionable insights, ensuring that the changes you implement enhance the experience for all user groups and lead to more effective and targeted optimizations.

5.8 Inconsistent Implementation

Inconsistent implementation is a common A/B testing mistake that can lead to unreliable results and misguided decisions. This error occurs when the tested variations are not applied consistently across all users or when the testing conditions are not uniformly maintained throughout the test duration.

Such inconsistencies can introduce biases, making it difficult to determine the true impact of the changes being tested.

For example, if you are testing a new homepage layout but a technical glitch causes some visitors assigned to the new version to see the old one instead, the test results will be skewed.

Another aspect of inconsistent implementation is failing to ensure that all test elements are identical except for the tested variable. For instance, if you are testing two different call-to-action buttons but other elements like the page load speed or the surrounding content differ between the two versions, it becomes challenging to attribute the results solely to the button changes.

To avoid inconsistent implementation, it’s essential to use reliable A/B testing tools that ensure proper randomization and consistent delivery of test variations. Additionally, thorough testing and quality assurance processes should be in place to identify and fix any issues before the test goes live.
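A quick health check for consistent delivery is a sample ratio mismatch (SRM) test. If you planned a 50/50 split but the visitor counts per version are more lopsided than chance would explain, something in the implementation (redirects, caching, or tracking, for example) is probably off. The Python sketch below applies a chi-square goodness-of-fit test with one degree of freedom. The 0.001 threshold is a conservative choice often used for this check, and the visitor counts are hypothetical.

```python
from math import erfc, sqrt

def sample_ratio_mismatch(visitors_a: int, visitors_b: int, threshold: float = 0.001) -> bool:
    """Return True if a planned 50/50 split looks broken (chi-square test, 1 degree of freedom)."""
    expected = (visitors_a + visitors_b) / 2
    chi2 = ((visitors_a - expected) ** 2 + (visitors_b - expected) ** 2) / expected
    p_value = erfc(sqrt(chi2 / 2))  # upper-tail probability of chi-square with 1 df
    return p_value < threshold

print(sample_ratio_mismatch(10_000, 10_150))  # small gap, plausibly chance
print(sample_ratio_mismatch(10_000, 10_600))  # gap too large to be chance
```

A flagged mismatch means the results should not be trusted until the cause is found and fixed, however good the conversion numbers look.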

6 Conclusion

A/B testing in SEO is essential for driving meaningful improvements to your website’s performance and user experience.

By carefully designing your tests, focusing on key elements such as title tags, meta descriptions, and content layouts, and avoiding common mistakes like inconsistent implementation and neglecting audience segmentation, you can obtain valuable insights that lead to data-driven decisions.

Remember that successful A/B testing requires a balanced approach. Combine quantitative metrics with qualitative user feedback to ensure that your optimizations boost performance and enhance overall user satisfaction.

If you like this post, let us know by Tweeting @rankmathseo.
