Many are aware of the popular Chain of Thought (CoT) method of prompting generative AI to obtain better, more sophisticated responses. Researchers from Google DeepMind and Princeton University developed an improved prompting strategy called Tree of Thoughts (ToT) that supports more deliberate reasoning and produces better outputs.

The researchers explain:

“We show how deliberate search in trees of thoughts (ToT) produces better results, and more importantly, interesting and promising new ways to use language models to solve problems requiring search or planning.”

Researchers Compare Against Three Kinds Of Prompting

The research paper compares ToT against three other prompting strategies.

1. Input-output (IO) Prompting

This is basically giving the language model a problem to solve and getting the answer.

An example based on text summarization is:

Input Prompt: Summarize the following article.

Output: A summary of the article that was input.

2. Chain Of Thought Prompting

Chain-of-Thought (CoT) prompting guides a language model through the intermediate reasoning steps needed to solve a problem, encouraging it to follow a logical sequence of thoughts and produce a coherent, connected response.

Chain Of Thought Prompting Example:

Question: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?

Reasoning: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer: 11

Question: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?
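As a rough illustration, the worked example and the new question can be assembled into a single few-shot prompt. This is a minimal sketch: `build_cot_prompt` is a hypothetical helper written for this article, not part of any library, and the resulting text would still need to be sent to a language model of your choice.

```python
# Hypothetical helper (not from the paper): builds a few-shot CoT prompt as plain text.
def build_cot_prompt(examples, question):
    """examples: list of (question, reasoning) pairs used as demonstrations."""
    parts = []
    for example_question, reasoning in examples:
        parts.append(f"Question: {example_question}\nReasoning: {reasoning}")
    # End with an open "Reasoning:" cue so the model writes out its own
    # intermediate steps before giving the answer.
    parts.append(f"Question: {question}\nReasoning:")
    return "\n\n".join(parts)


examples = [(
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?",
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer: 11",
)]

prompt = build_cot_prompt(
    examples,
    "The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, "
    "how many apples do they have?",
)
print(prompt)
```

The demonstration shows the model the style of reasoning expected, and the trailing cue invites it to reason the same way about the new question.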

3. Self-consistency with CoT

In simple terms, this strategy prompts the language model multiple times and then chooses the most commonly arrived-at answer.

The research paper on Self-consistency with CoT from March 2023 explains it:

“It first samples a diverse set of reasoning paths instead of only taking the greedy one, and then selects the most consistent answer by marginalizing out the sampled reasoning paths. Self-consistency leverages the intuition that a complex reasoning problem typically admits multiple different ways of thinking leading to its unique correct answer.”
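The selection step can be pictured as a simple majority vote over the final answers. The sketch below assumes each sampled reasoning path ends with "The answer: <value>", as in the CoT example earlier. The function names are hypothetical, and the three sample paths are hand-written stand-ins for illustration, not real model outputs.

```python
import re
from collections import Counter


def extract_answer(reasoning_path):
    """Pull the final answer out of a path that contains 'The answer: <value>'."""
    match = re.search(r"The answer:\s*(\S+)", reasoning_path)
    return match.group(1) if match else None


def self_consistent_answer(reasoning_paths):
    """Return the most common final answer across the sampled paths."""
    answers = [a for a in map(extract_answer, reasoning_paths) if a is not None]
    if not answers:
        return None
    return Counter(answers).most_common(1)[0][0]


# Hand-written stand-ins for several sampled outputs to the cafeteria question.
sampled_paths = [
    "23 - 20 = 3. 3 + 6 = 9. The answer: 9",
    "They used 20, leaving 3, then bought 6 more for 9. The answer: 9",
    "23 + 6 = 29. The answer: 29",
]
print(self_consistent_answer(sampled_paths))  # 9
```

Two of the three paths agree on 9, so the outlier is outvoted even though it also looks like a plausible chain of reasoning.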

Dual Process Models in Human Cognition

The researchers take inspiration from a theory of human decision-making called dual process models in human cognition, or dual process theory.

Dual process models in human cognition propose that humans engage in two kinds of decision-making processes, one that is intuitive and fast and another that is more deliberative and slower.

- Fast, Automatic, Unconscious

This mode involves fast, automatic, and unconscious thinking that’s often said to be based on intuition.

- Slow, Deliberate, Conscious

This mode of decision-making is a slow, deliberate, and conscious thinking process that involves careful consideration, analysis, and step-by-step reasoning before settling on a final decision.

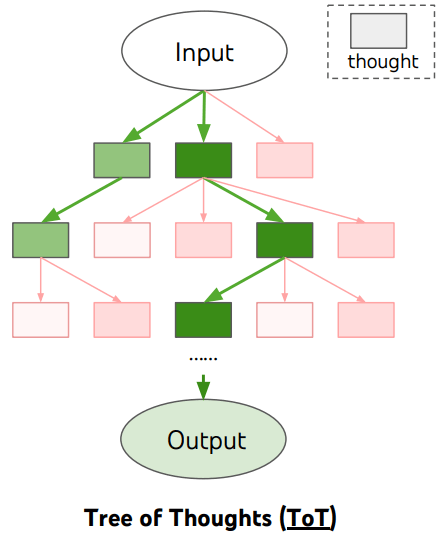

The Tree of Thoughts (ToT) prompting framework represents each step of the reasoning process as a node in a tree, which allows the language model to evaluate each reasoning step and decide whether that step is viable and likely to lead to an answer. If the language model decides that a reasoning path will not lead to an answer, the prompting strategy requires it to abandon that path (or branch) and move on to another branch until it reaches the final result.
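One way to picture this is as a search loop that proposes next steps, scores them, drops branches judged not viable, and continues from the most promising ones. The sketch below is an illustration under stated assumptions, not the researchers' implementation: in a real ToT setup the `propose` and `evaluate` steps would be calls to a language model, while here they are plain Python stand-ins for a toy problem (reach 10 from 1 using "+3" or "*2"). All function names and parameters are hypothetical.

```python
def tree_of_thoughts(root, propose, evaluate, is_solution, beam_width=2, max_depth=4):
    """Breadth-first search over partial solutions ("thoughts").

    propose(state)  -> candidate next states (stand-in for asking the model for next steps)
    evaluate(state) -> numeric score; 0 or less means "abandon this branch"
    """
    frontier = [root]
    for _ in range(max_depth):
        candidates = [child for state in frontier for child in propose(state)]
        for child in candidates:
            if is_solution(child):
                return child
        # Abandon branches judged not viable, then keep only the most promising few.
        viable = [c for c in candidates if evaluate(c) > 0]
        viable.sort(key=evaluate, reverse=True)
        frontier = viable[:beam_width]
        if not frontier:
            break
    return None


TARGET = 10


def propose(state):
    value, path = state
    return [(value + 3, path + ("+3",)), (value * 2, path + ("*2",))]


def evaluate(state):
    value, _ = state
    if value > TARGET:
        return 0.0  # both operations only increase the value, so overshooting is a dead end
    return 1.0 / (1 + TARGET - value)  # closer to the target scores higher


def is_solution(state):
    return state[0] == TARGET


result = tree_of_thoughts((1, ()), propose, evaluate, is_solution)
print(result)  # (10, ('+3', '+3', '+3'))
```

The `beam_width` setting controls how many branches survive each round. A wider beam explores more alternatives at the cost of more evaluations, which in practice means more model calls.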

Tree Of Thoughts (ToT) Versus Chain of Thought (CoT)

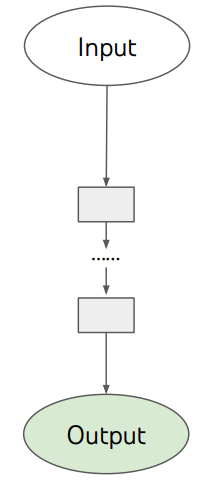

The difference between ToT and CoT is that ToT has a tree-and-branch framework for the reasoning process, whereas CoT takes a more linear path.

In simple terms, CoT tells the language model to follow a series of steps to accomplish a task, which resembles the System 1 cognitive model that is fast and automatic.

ToT resembles the System 2 cognitive model, which is more deliberative. It tells the language model to follow a series of steps but also has an evaluator review each one: if the step is good, the model keeps going; if not, it stops and follows another path.

Illustrations Of Prompting Strategies

The research paper published schematic illustrations of each prompting strategy, with rectangular boxes that represent a “thought” within each step toward completing a task or solving a problem.

The following is a screenshot of what the reasoning process for ToT looks like:

Illustration of Chain of Thought Prompting

This is the schematic illustration for CoT, showing how the thought process is more of a straight path (linear):

The research paper explains:

“Research on human problem-solving suggests that people search through a combinatorial problem space – a tree where the nodes represent partial solutions, and the branches correspond to operators that modify them. Which branch to take is determined by heuristics that help to navigate the problem-space and guide the problem-solver towards a solution. This perspective highlights two key shortcomings of existing approaches that use LMs to solve general problems:

1) Locally, they do not explore different continuations within a thought process – the branches of the tree.

2) Globally, they do not incorporate any type of planning, lookahead, or backtracking to help evaluate these different options – the kind of heuristic-guided search that seems characteristic of human problem-solving.

To address these shortcomings, we introduce Tree of Thoughts (ToT), a paradigm that allows LMs to explore multiple reasoning paths over thoughts…”

Tested With A Mathematical Game

The researchers tested the method using the Game of 24, a mathematical card game in which players combine four numbers from a set of cards, using each number only once, with basic arithmetic (addition, subtraction, multiplication, and division) to reach a result of 24.
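To make the rules concrete, here is a small brute-force solver for the game. It is a conventional exhaustive search written for illustration, not the language-model-driven search the researchers evaluated, and the names `solve_24` and `_search` are hypothetical. It repeatedly picks two of the remaining numbers, combines them with one operation, and recurses until a single value is left, so every number is used exactly once.

```python
from fractions import Fraction
from itertools import combinations


def solve_24(numbers, target=24):
    """Return one expression that combines all numbers into target, or None."""
    # Each item pairs an exact value with the expression text that produced it.
    items = [(Fraction(n), str(n)) for n in numbers]
    return _search(items, Fraction(target))


def _search(items, target):
    if len(items) == 1:
        value, expr = items[0]
        return expr if value == target else None
    for i, j in combinations(range(len(items)), 2):
        (a, ea), (b, eb) = items[i], items[j]
        rest = [items[k] for k in range(len(items)) if k not in (i, j)]
        options = [
            (a + b, f"({ea} + {eb})"),
            (a * b, f"({ea} * {eb})"),
            (a - b, f"({ea} - {eb})"),
            (b - a, f"({eb} - {ea})"),
        ]
        # Division is only offered when the divisor is non-zero.
        if b != 0:
            options.append((a / b, f"({ea} / {eb})"))
        if a != 0:
            options.append((b / a, f"({eb} / {ea})"))
        for option in options:
            found = _search(rest + [option], target)
            if found is not None:
                return found
    return None


print(solve_24([4, 9, 10, 13]))  # prints one valid expression
print(solve_24([1, 1, 1, 1]))    # None
```

Using `Fraction` keeps division exact, which matters for puzzles whose solutions pass through non-integer intermediate values.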

Results and Conclusions

The researchers tested the ToT prompting strategy against the three other approaches and found that it produced consistently better results.

However, they also note that ToT may not be necessary for tasks that GPT-4 already does well.

They conclude:

“The associative “System 1” of LMs can be beneficially augmented by a “System 2” based on searching a tree of possible paths to the solution to a problem.

The Tree of Thoughts framework provides a way to translate classical insights about problem-solving into actionable methods for contemporary LMs.

At the same time, LMs address a weakness of these classical methods, providing a way to solve complex problems that are not easily formalized, such as creative writing. We see this intersection of LMs with classical approaches to AI as an exciting direction.”

Read the original research paper:

Tree of Thoughts: Deliberate Problem Solving with Large Language Models
